Search should understand content

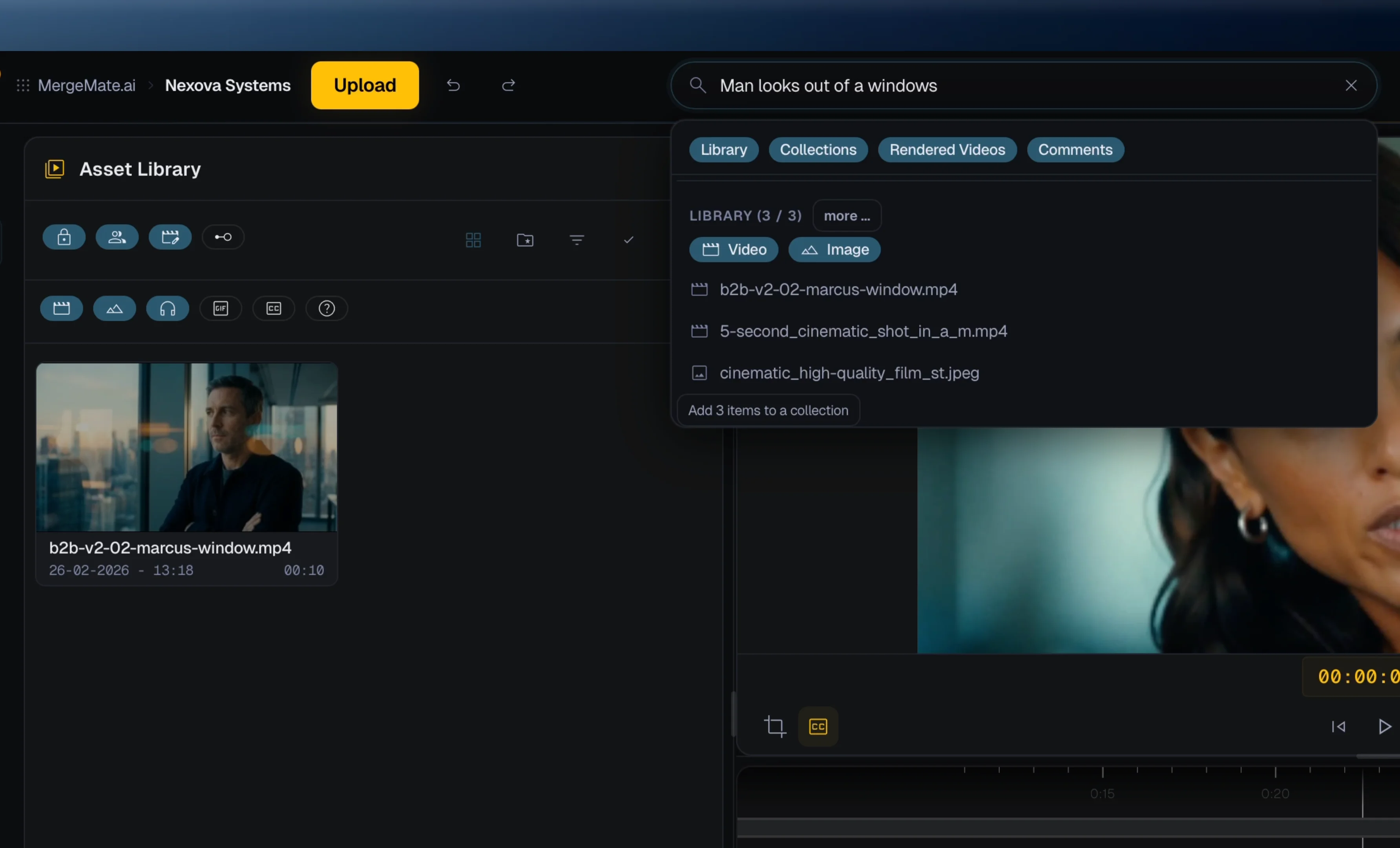

A useful AI search layer can connect visual content, spoken words, metadata, and project decisions. That means a team can look for a topic, mood, scene, person, or production need instead of guessing a filename.

Video teams spend too much time searching. MergeMate.ai is designed around project-aware search so teams can find footage, generated media, transcript moments, and review context by describing what they need.

Modern productions mix footage, AI-generated B-roll, stills, audio, voiceovers, and subtitles. Search works best when all of those assets live in the same production context.

The point is not just finding a file. The point is turning found material into edits, rough cuts, review versions, and platform-specific exports faster.

AI video search

Search engines and AI answer engines reward clear, specific pages that answer one intent well. This page connects that intent to MergeMate.ai's core idea: Mergi as a project-aware production partner for teams working with real footage, generative models, review, and delivery.

Depending on what is available in the project, teams should be able to search by transcript topic, visual description, project asset, generated output, or production status.

Folder search depends on manual naming. AI video search can use semantic context from visual content, transcripts, and project memory.
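To make the contrast concrete, here is a minimal Python sketch. It is not MergeMate.ai's implementation or API: the `Asset` fields, the sample clips, and both search functions are hypothetical, and plain keyword overlap stands in for the semantic matching (for example, embeddings) a real system would likely use.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Asset:
    # Hypothetical asset record; field names are illustrative assumptions.
    filename: str
    transcript: str = ""
    visual_description: str = ""
    tags: list[str] = field(default_factory=list)


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def filename_search(assets: list[Asset], query: str) -> list[Asset]:
    """Folder-style search: matches only if the query appears in the filename."""
    q = query.lower()
    return [a for a in assets if q in a.filename.lower()]


def context_search(assets: list[Asset], query: str) -> list[Asset]:
    """Rank assets by how many query words appear in their transcript,
    visual description, or tags. Keyword overlap is a simple stand-in
    for semantic similarity."""
    q = tokenize(query)
    scored = []
    for a in assets:
        context = tokenize(" ".join([a.transcript, a.visual_description, *a.tags]))
        score = len(q & context)
        if score:
            scored.append((score, a))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [a for _, a in scored]


# Made-up sample clips with camera-style filenames that say nothing about content.
ASSETS = [
    Asset(
        "A001_C003.mov",
        transcript="we launched the product at sunrise",
        visual_description="drone shot of a beach at sunrise",
        tags=["b-roll"],
    ),
    Asset(
        "A001_C004.mov",
        transcript="interview about budget planning",
        visual_description="two people talking in an office",
        tags=["interview"],
    ),
]

if __name__ == "__main__":
    print(filename_search(ASSETS, "beach sunrise"))
    print([a.filename for a in context_search(ASSETS, "beach sunrise")])
```

In this sketch the filename search finds nothing for "beach sunrise" because the clip is named `A001_C003.mov`, while the context search finds it through the visual description and transcript.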

MergeMate.ai is built by founders combining 25+ years of professional film production with software architecture for AI orchestration, collaboration, and cloud workflows.

By Thomas Fenkart — 25+ years in professional video production · Last updated: May 2026

MergeMate is in Early Access. We're not looking for beta testers — we're looking for co-builders. Get in now, shape what it becomes, and pay a lot less than everyone who waits.